A vendor-neutral technical guide to how autonomous mobile robots actually find their way — the sensors, algorithms, failure modes, and architectural choices that separate reliable fleets from stalled pilots.

Figure 1 — An AMR using onboard sensors and SLAM to navigate an industrial environment (representative image).

Why Navigation Is the Hardest Problem in Industrial AMRs

Every discussion about autonomous mobile robots eventually reduces to the same question: how does the robot actually know where it is? It is the question that separates AMRs from the AGVs that preceded them, the question that separates reliable fleets from stalled pilots, and the question that buyers most consistently underweight during procurement. A robot that navigates reliably in the vendor’s demonstration video can fail repeatedly in the customer’s actual facility, and the reasons are almost always rooted in a mismatch between the navigation technology and the operating environment.

This matters commercially because the economics of industrial AMR deployment are dominated by uptime. A fleet of twenty robots operating at 99% navigation reliability and one operating at 95% deliver very different ROI, because the 4-point gap translates into escalations, operator intervention, production delays, and maintenance workload that compound across every shift. Frost & Sullivan’s 2023 Market Research on Global Commercial Service Robots documents a broader commercial service robotics market growing at 20.3% compound annually toward nearly USD 1.5 billion by 2030, and the vendors scaling fastest within that market are those whose navigation systems handle real-world edge cases robustly, not just the scripted scenarios that look good in a demo.
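A back-of-envelope calculation makes the gap concrete. The sketch assumes, hypothetically, that "navigation reliability" means the probability that a single mission completes without a navigation-related intervention, that missions are independent, and that each robot runs 40 missions per shift; none of these figures comes from the cited report.

```python
def interventions_per_shift(robots: int, missions_per_robot: int, reliability: float) -> float:
    """Expected navigation interventions per shift.

    Assumes (hypothetically) that `reliability` is the probability that one
    mission completes without a navigation-related intervention, and that
    mission outcomes are independent of each other.
    """
    return robots * missions_per_robot * (1.0 - reliability)


# Illustrative fleet: 20 robots, 40 missions each per shift (assumed figures).
for reliability in (0.99, 0.95):
    n = interventions_per_shift(robots=20, missions_per_robot=40, reliability=reliability)
    print(f"{reliability:.0%} reliability -> {n:.0f} interventions per shift")
```

Under those assumptions the 95% fleet needs roughly 40 interventions per shift against 8 for the 99% fleet: a 4-point difference in reliability becomes a fivefold difference in interventions.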

This article is a vendor-neutral technical guide to AMR navigation. It explains what sensors AMRs use, how SLAM works, why different SLAM approaches succeed or fail in specific environments, and how to evaluate vendor claims about navigation robustness during procurement. It is pitched at a technical-manager level — thorough enough to support a real evaluation, but free of academic jargon that obscures the practical decisions.

Why Indoor Autonomous Navigation Is Hard

Outdoor autonomous vehicles have GPS. GPS is not a trivial signal to use well, but it provides an absolute global position reference that indoor robots do not have. Inside a factory, warehouse, or distribution center, GPS is unavailable. The robot has to answer two questions continuously from onboard sensors alone:

- Where am I? Localization — the robot’s current position and orientation, typically expressed as (x, y, θ) in a 2D map frame, updated many times per second.

- What does the world around me look like? Mapping — a persistent representation of the environment the robot can use to plan paths and compare against real-time sensor data.
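In practice a 2D pose is handled as a rigid transform, and motion measured in the robot's own frame has to be composed onto its map-frame pose. The sketch below shows that composition; the `Pose2D` class and `normalize_angle` helper are illustrative names, not part of any particular AMR SDK.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Planar pose (x, y, theta) in metres and radians."""
    x: float
    y: float
    theta: float

    def compose(self, delta: "Pose2D") -> "Pose2D":
        """Apply a motion increment expressed in this pose's own (robot) frame."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        return Pose2D(
            x=self.x + c * delta.x - s * delta.y,
            y=self.y + s * delta.x + c * delta.y,
            theta=normalize_angle(self.theta + delta.theta),
        )


def normalize_angle(a: float) -> float:
    """Wrap an angle into the range [-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


# Robot at (2, 1) facing +y drives 1 m straight ahead in its own frame.
pose = Pose2D(2.0, 1.0, math.pi / 2).compose(Pose2D(1.0, 0.0, 0.0))
print(round(pose.x, 6), round(pose.y, 6))  # ends up at (2, 2)
```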

The technique that solves both simultaneously is Simultaneous Localization and Mapping (SLAM). Academic SLAM research dates to the 1980s, but industrial-grade implementations mature enough for 24/7 commercial deployment are substantially newer — most of the production-quality SLAM stacks in today’s AMRs reflect algorithmic work from the past ten to fifteen years combined with increasingly capable sensors and compute hardware.

Three specific properties of industrial environments make SLAM genuinely difficult, and understanding them is essential to evaluating vendor claims.

1. The Environment Keeps Changing

Warehouses move inventory constantly. Factories reconfigure production lines frequently. Even in “stable” facilities, pallets appear and disappear, carts are relocated, finished goods accumulate and ship out. A map that was correct this morning may be misleading this afternoon. Robust SLAM systems must distinguish between the persistent structure of the environment (walls, fixed machinery, structural columns) and the transient content (inventory, mobile equipment, people), tracking the robot’s position relative to the persistent structure while filtering out the transient content.
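One simple way to separate persistent structure from transient content, shown here as an illustrative sketch rather than any vendor's method, is to record which grid cells are occupied across several mapping passes and keep only the cells that are occupied in most of them. It assumes each pass covers the same area; the grid-cell representation and the 0.8 threshold are assumptions.

```python
from collections import Counter
from typing import Iterable, Set, Tuple

Cell = Tuple[int, int]


def persistent_cells(passes: Iterable[Set[Cell]], min_fraction: float = 0.8) -> Set[Cell]:
    """Return cells occupied in at least `min_fraction` of the mapping passes.

    Each pass is the set of grid cells observed as occupied during one
    traversal. Walls and columns appear in nearly every pass; pallets and
    carts that move between passes fall below the threshold.
    """
    passes = list(passes)
    counts = Counter(cell for occupied in passes for cell in occupied)
    needed = min_fraction * len(passes)
    return {cell for cell, n in counts.items() if n >= needed}
```

A production system would also track cells observed as free and let evidence decay over time, but the principle (weight what persists, discount what comes and goes) is the same.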

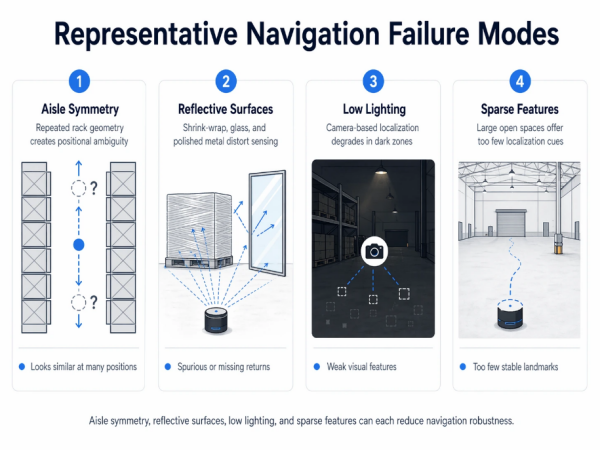

2. Real Industrial Environments Are Unfriendly to Sensors

Warehouse racking creates long visual canyons that confuse vision-based localization. Shrink-wrap, polished metal, and reflective plastics generate spurious LiDAR returns. Low lighting in cold storage and certain manufacturing zones degrades camera performance. Dust, mist, and temperature gradients can all affect sensor quality. Most industrial environments stress at least one sensor type, which is why fusion approaches have become increasingly standard.

3. Safety Is Non-Negotiable

A consumer robot vacuum that briefly gets lost is merely annoying. An industrial AMR that gets lost may run into a forklift, a worker, or a production cell. ISO 3691-4, the international safety standard for driverless industrial trucks, imposes specific requirements on how the robot behaves under localization uncertainty. Navigation systems that cannot maintain confident localization must be able to recognize their own uncertainty and respond safely — slowing, stopping, or requesting human intervention. Overconfident localization is more dangerous than humble localization.
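The "recognize uncertainty and respond safely" behaviour can be pictured as a policy that maps localization uncertainty to an action. In the sketch below, the thresholds, the action names, and the use of the worst-case standard deviation from the 2x2 position covariance are all hypothetical; real limits come from the vehicle's risk assessment, and a layer like this would sit above, not replace, the safety-rated functions. It also only helps if the reported covariance is honest, which is the point about overconfidence.

```python
import math
from enum import Enum


class Action(Enum):
    NORMAL = "normal speed"
    SLOW = "reduced speed"
    STOP = "stop and attempt relocalization"
    ASSIST = "stop and request human intervention"


def localization_action(cov_xx: float, cov_yy: float, cov_xy: float,
                        slow_at_m: float = 0.05, stop_at_m: float = 0.15,
                        assist_at_m: float = 0.50) -> Action:
    """Choose a behaviour from the 2x2 position covariance (square metres).

    Uses the largest eigenvalue of the covariance, i.e. the worst-case
    one-sigma position error in any direction. All thresholds are assumed.
    """
    half_trace = 0.5 * (cov_xx + cov_yy)
    spread = math.sqrt((0.5 * (cov_xx - cov_yy)) ** 2 + cov_xy ** 2)
    worst_sigma = math.sqrt(half_trace + spread)
    if worst_sigma >= assist_at_m:
        return Action.ASSIST
    if worst_sigma >= stop_at_m:
        return Action.STOP
    if worst_sigma >= slow_at_m:
        return Action.SLOW
    return Action.NORMAL
```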

The Sensor Stack: What AMRs Actually Perceive With

Before examining SLAM approaches, it helps to understand the sensors that feed them. Modern industrial AMRs typically carry four or more sensor types, each contributing a different perspective on the environment.

2D LiDAR

A rotating laser that measures distance to obstacles in a horizontal plane, typically at the height of the robot’s main safety scanner (around 200–300 mm above the floor). 2D LiDAR is the workhorse of industrial AMR navigation: it produces precise distance measurements (typically ±30 mm accuracy at 10 meters), operates well in varying lighting, and is available as safety-certified hardware compatible with ISO 3691-4 requirements. Most industrial AMRs carry one or two safety-rated 2D LiDARs — a front unit, often a rear unit — handling both navigation and human-detection safety.
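A 2D LiDAR typically reports one range per beam at a fixed angular step. The sketch below converts such a scan into Cartesian points in the sensor frame; the parameter names follow the common `angle_min` / `angle_increment` convention (as in the ROS `sensor_msgs/LaserScan` message), and the default range limits are assumed values rather than any specific scanner's specification.

```python
import math
from typing import List, Sequence, Tuple


def scan_to_points(ranges: Sequence[float], angle_min: float, angle_increment: float,
                   range_min: float = 0.05, range_max: float = 30.0) -> List[Tuple[float, float]]:
    """Convert polar LiDAR ranges to (x, y) points in the sensor frame.

    Beams with no return (reported here as inf or NaN) or outside
    [range_min, range_max] are dropped.
    """
    points = []
    for i, r in enumerate(ranges):
        if not math.isfinite(r) or r < range_min or r > range_max:
            continue
        angle = angle_min + i * angle_increment
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points
```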

3D LiDAR

A more sophisticated sensor producing point clouds in three dimensions rather than a single horizontal plane. Premium AMRs increasingly include 3D LiDAR for more complete environmental awareness, particularly in environments with overhead obstacles, ramps, or complex three-dimensional geometry. Cost is meaningfully higher than 2D LiDAR, which is why 3D LiDAR remains concentrated in higher-end models rather than becoming universal.

RGB and RGBD (Depth) Cameras

Visible-light cameras provide rich feature information — textures, colors, visual patterns, readable signage — that LiDAR does not capture. Depth cameras add per-pixel distance information, creating dense 3D reconstructions of the environment. Cameras are essential for VSLAM approaches, for object classification (pallet vs. person vs. wall), and for tasks requiring visual inspection (pallet label reading, fixture recognition). Their limitations are sensitivity to lighting conditions and dependence on visual texture — a perfectly smooth white wall gives a camera almost no localization information.
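Depth cameras build their dense 3D reconstructions by back-projecting each pixel through the camera's intrinsic parameters. The sketch below assumes an ideal pinhole model with no lens distortion; the intrinsic values in the usage line are hypothetical.

```python
from typing import Tuple


def backproject(u: float, v: float, depth_m: float,
                fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float, float]:
    """Map pixel (u, v) with metric depth to a 3D point in the camera frame.

    Pinhole model: x points right, y points down, z points forward along
    the optical axis. fx, fy are focal lengths in pixels; (cx, cy) is the
    principal point.
    """
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


# Hypothetical 640x480 camera: a pixel 100 px right of centre, seen at 2 m depth.
print(backproject(420.0, 240.0, 2.0, fx=600.0, fy=600.0, cx=320.0, cy=240.0))
```

Repeating this for every valid pixel yields the per-frame point cloud; a smooth white wall still produces good depth points, but it gives the visual feature tracker nothing to follow.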

Inertial Measurement Units (IMUs)

Accelerometers and gyroscopes measuring acceleration and angular velocity. IMUs cannot localize absolutely — their measurements drift over seconds or minutes — but they provide short-term motion estimates that bridge gaps between other sensor updates. IMUs are essential for maintaining localization stability during LiDAR or camera frame dropouts, aggressive maneuvers, or brief sensor occlusions.
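The drift comes from integrating small sensor biases. The minimal illustration below assumes a hypothetical constant gyro bias of 0.01 degrees per second on a stationary robot sampled at 100 Hz: the integrated heading error grows linearly with time (position error from a constant accelerometer bias grows with the square of time, which is worse still).

```python
def integrated_heading_error(bias_deg_s: float, rate_hz: float, seconds: float) -> float:
    """Heading error (degrees) after integrating a biased gyro on a stationary robot.

    The true angular velocity is zero, so every integrated sample adds only
    the bias. The bias value used below is an assumption for illustration.
    """
    dt = 1.0 / rate_hz
    heading = 0.0
    for _ in range(int(round(seconds * rate_hz))):
        measured = 0.0 + bias_deg_s  # true rate (zero) plus constant bias
        heading += measured * dt
    return heading


for t in (10, 60, 600):
    print(f"after {t:>3} s: {integrated_heading_error(0.01, 100.0, t):.2f} deg")
```

A few tenths of a degree is harmless over a short occlusion but not over a shift, which is why the IMU bridges gaps rather than localizing on its own.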

Wheel Encoders and Odometry

Rotation sensors on the drive wheels provide wheel-level motion estimates. Combined with IMU data and a kinematic model of the vehicle, odometry provides a short-term dead-reckoning position estimate. Odometry drift accumulates over time — a few percent of distance traveled is typical — so it cannot substitute for SLAM-based localization, but it provides the continuous motion estimate that SLAM algorithms use as a prior.
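For a differential-drive AMR the kinematic model is simple enough to sketch directly. The encoder resolution, wheel radius, and track width below are placeholder parameters; real vehicles calibrate them, and errors in them are one source of the drift described above.

```python
import math
from typing import Tuple


def update_odometry(pose: Tuple[float, float, float], left_ticks: int, right_ticks: int,
                    ticks_per_rev: int = 4096, wheel_radius_m: float = 0.075,
                    track_width_m: float = 0.45) -> Tuple[float, float, float]:
    """Dead-reckon a differential-drive pose (x, y, theta) from encoder tick deltas.

    Uses the midpoint heading for the translation step, a common
    approximation over a short update interval. Default parameters are
    hypothetical.
    """
    x, y, theta = pose
    metres_per_tick = 2.0 * math.pi * wheel_radius_m / ticks_per_rev
    d_left = left_ticks * metres_per_tick
    d_right = right_ticks * metres_per_tick
    d_center = 0.5 * (d_left + d_right)          # forward travel of the axle midpoint
    d_theta = (d_right - d_left) / track_width_m  # rotation from the wheel difference
    mid = theta + 0.5 * d_theta
    return (x + d_center * math.cos(mid), y + d_center * math.sin(mid), theta + d_theta)
```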

Ultrasonic and Other Auxiliary Sensors

Short-range ultrasonic sensors are common for close-in obstacle detection, particularly for transparent obstacles (glass doors) that LiDAR may miss. Some AMRs include downward-facing sensors for drop-off detection, bumpers for tactile emergency response, and specialized sensors for pallet-position verification during lift operations.

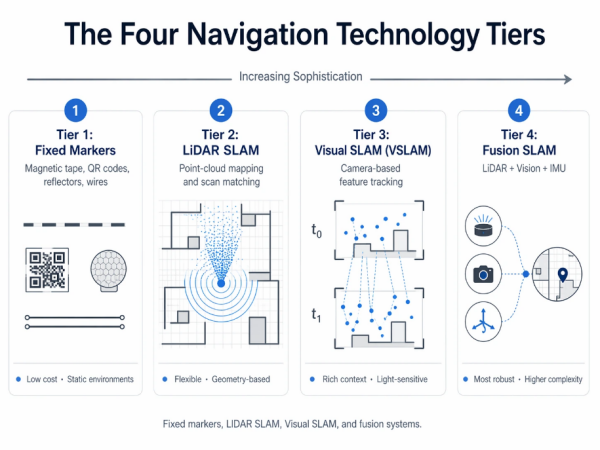

The Four Navigation Technology Tiers

Industrial AMRs use four broad navigation approaches, arranged here in approximately increasing sophistication. Each has characteristic strengths, characteristic failure modes, and characteristic vendor associations.

| Tier | Approach | Strengths | Failure Modes |
|---|---|---|---|
| 1. Fixed markers | Magnetic tape, QR codes, reflectors, wires | Low cost; simple; reliable in static environments | Breaks when markers are obscured or removed; requires facility modification |
| 2. LiDAR SLAM | Match LiDAR point clouds to a pre-built or live-built map | Medium cost; flexible; no facility modification | Degrades in large featureless spaces or with reflective surfaces |
| 3. Visual SLAM (VSLAM) | Track visual features across camera frames | Rich environmental context; handles visually dense spaces | Degrades in low light or visually repetitive spaces |
| 4. Fusion SLAM | Combine LiDAR + vision + IMU, often with auxiliary references | Robust across conditions; best real-world performance | Higher cost and compute requirements; complexity |

Figure 2 — The four navigation technology tiers: fixed markers, LiDAR SLAM, Visual SLAM, and fusion systems.

Tier 1: Fixed Markers (Strictly Speaking, Not AMR)

Magnetic tape, QR codes, laser reflectors, and buried wires provide an external position reference. Robots using these approaches are technically AGVs rather than AMRs, though some vendor marketing blurs the distinction. The fundamental limitation is that the environment has to be prepared and maintained: every marker obstruction, removal, or damage event degrades performance. Fixed-marker navigation works well in highly stable, well-maintained environments (some automotive assembly lines have used it successfully for decades) and fails in dynamic environments (layout changes, mixed operations, seasonal reconfigurations). This tier appears in this article for completeness; the remainder focuses on true SLAM-based navigation.

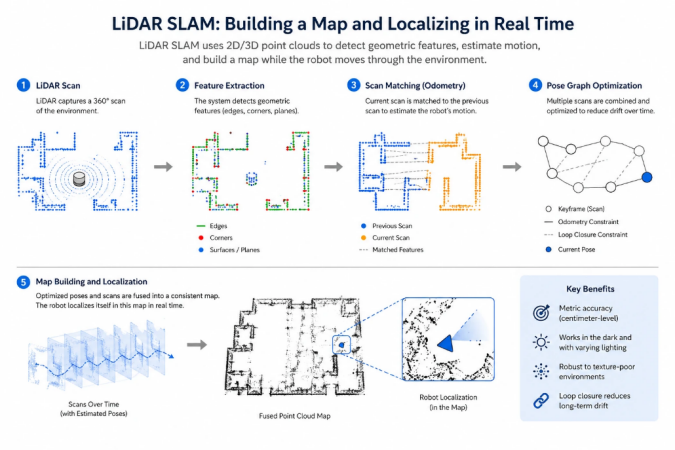

Tier 2: LiDAR SLAM in Depth

LiDAR SLAM is the dominant navigation approach in industrial AMRs today. The basic principle: the robot’s LiDAR produces a continuous stream of point clouds describing the geometry of the surroundings; the SLAM algorithm matches these point clouds against a map (either pre-built or being built in real time), extracting the robot’s position from the best-fitting match.
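The "best-fitting match" is commonly found by iterative alignment. Below is a minimal point-to-point ICP (iterative closest point) sketch in plain Python: it repeatedly pairs each scan point with its nearest map point and solves the closed-form 2D rigid alignment. It illustrates one standard scan-matching technique, not necessarily what any particular AMR ships; production systems add outlier rejection, spatial indexing, and an odometry prior as the starting guess.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def best_rigid_transform(src: List[Point], dst: List[Point]) -> Tuple[float, float, float]:
    """Closed-form least-squares (theta, tx, ty) mapping paired src points onto dst."""
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        px, py, qx, qy = px - sx, py - sy, qx - dx, qy - dy  # centre both sets
        dot += px * qx + py * qy
        cross += px * qy - py * qx
    theta = math.atan2(cross, dot)  # rotation that best aligns the centred sets
    c, s = math.cos(theta), math.sin(theta)
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def transform(pose: Tuple[float, float, float], pts: List[Point]) -> List[Point]:
    theta, tx, ty = pose
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in pts]


def nearest(p: Point, candidates: List[Point]) -> Point:
    # Brute force for clarity; real systems use a k-d tree or grid lookup.
    return min(candidates, key=lambda m: (m[0] - p[0]) ** 2 + (m[1] - p[1]) ** 2)


def icp(scan: List[Point], map_pts: List[Point], iterations: int = 30) -> Tuple[float, float, float]:
    """Estimate the pose (theta, tx, ty) that carries `scan` onto `map_pts`."""
    theta, tx, ty = 0.0, 0.0, 0.0  # identity start; odometry would give a better guess
    for _ in range(iterations):
        moved = transform((theta, tx, ty), scan)
        pairs = [nearest(p, map_pts) for p in moved]
        d_theta, d_tx, d_ty = best_rigid_transform(moved, pairs)
        # Apply the increment after the current estimate: t_new = R(d_theta) t + d_t.
        c, s = math.cos(d_theta), math.sin(d_theta)
        theta, tx, ty = theta + d_theta, c * tx - s * ty + d_tx, s * tx + c * ty + d_ty
    return theta, tx, ty
```

The nearest-neighbour pairing is also where the failure modes below enter: if the geometry offers no distinct correspondences, the alignment has nothing to lock onto.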

Two variants dominate production deployments.

Scan Matching (Grid-Based SLAM)

The environment is represented as an occupancy grid — a 2D map where each cell records whether that location is occupied, free, or unknown. Classic algorithms include GMapping (particle-filter-based) and Cartographer (optimization-based). These approaches are mature, well-understood, and perform reliably in geometrically rich environments with distinct features: corners, walls at varying angles, protruding fixtures, machinery.
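Occupancy grids are commonly maintained in log-odds form so that repeated observations can be accumulated by simple addition. The sketch below is a minimal version: the evidence increments, the clamping limits, and the ±0.15 probability band used to label cells "unknown" are assumed values, and ray tracing (deciding which cells a beam passed through) is left to the caller.

```python
import math
from typing import Dict, Tuple

Cell = Tuple[int, int]

L_OCC = math.log(0.7 / 0.3)   # evidence added when a beam ends in a cell (assumed)
L_FREE = math.log(0.4 / 0.6)  # evidence added when a beam passes through (assumed)
L_MIN, L_MAX = -4.0, 4.0      # clamp so old evidence can be overturned when things move


class OccupancyGrid:
    def __init__(self) -> None:
        self.log_odds: Dict[Cell, float] = {}  # unseen cells stay at 0.0, i.e. p = 0.5

    def update(self, cell: Cell, hit: bool) -> None:
        value = self.log_odds.get(cell, 0.0) + (L_OCC if hit else L_FREE)
        self.log_odds[cell] = max(L_MIN, min(L_MAX, value))

    def probability(self, cell: Cell) -> float:
        # Convert log-odds back to a probability of occupancy.
        return 1.0 - 1.0 / (1.0 + math.exp(self.log_odds.get(cell, 0.0)))

    def state(self, cell: Cell, margin: float = 0.15) -> str:
        p = self.probability(cell)
        if p > 0.5 + margin:
            return "occupied"
        if p < 0.5 - margin:
            return "free"
        return "unknown"
```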

Feature-Based Approaches

Rather than matching raw point clouds against a grid, feature-based LiDAR SLAM extracts identifiable features (corners, line segments, specific geometric primitives) and matches features across scans. This approach scales better to large environments and handles certain failure modes more gracefully, but requires environments with identifiable features to work from.
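A classic way to extract line-segment features from an ordered 2D scan is split-and-merge. The sketch below implements only the recursive "split" half (sometimes called iterative end-point fit); the 5 cm distance tolerance is an assumed value, and real extractors also split at range discontinuities and merge collinear neighbours.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def point_line_distance(p: Point, a: Point, b: Point) -> float:
    """Perpendicular distance from p to the infinite line through a and b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    length = math.hypot(bx - ax, by - ay)
    if length == 0.0:
        return math.hypot(px - ax, py - ay)
    return abs((bx - ax) * (ay - py) - (ax - px) * (by - ay)) / length


def split_segments(points: List[Point], tol: float = 0.05) -> List[Tuple[int, int]]:
    """Return (start, end) index pairs of line segments fitted to ordered scan points."""
    if len(points) < 2:
        return []

    def recurse(i: int, j: int) -> List[Tuple[int, int]]:
        if j - i < 2:
            return [(i, j)]
        dists = [point_line_distance(points[k], points[i], points[j]) for k in range(i + 1, j)]
        worst = max(range(len(dists)), key=dists.__getitem__)
        if dists[worst] <= tol:
            return [(i, j)]  # all interior points lie close to the chord
        k = i + 1 + worst    # split at the point farthest from the chord
        return recurse(i, k) + recurse(k, j)

    return recurse(0, len(points) - 1)
```

The segment endpoints (and the corners where segments meet) become the features matched across scans.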

Figure 3 — LiDAR SLAM: scan matching against an occupancy grid, and the feature-based alternative.

Where LiDAR SLAM Struggles

LiDAR SLAM has three characteristic failure modes that buyers should understand and test against.

- Large featureless spaces. In a 10,000-square-meter warehouse with wide central aisles and racking only at the perimeter, the robot may find itself tens of meters from the nearest geometric feature visible to its LiDAR. Localization accuracy degrades with distance from features, and in extreme cases the robot loses position entirely.

- Reflective and transparent surfaces. Shrink-wrapped pallets, polished metal machinery, and glass panels can return spurious LiDAR measurements or no returns at all. A scene filled with shrink-wrapped inventory can produce point clouds that look substantially different from pass to pass, confusing scan-matching algorithms.

- Aisle symmetry. A warehouse aisle with identical racking on both sides looks similar from many positions along that aisle. LiDAR-only SLAM can have difficulty uniquely determining which point along the aisle the robot is at, producing localization errors along the direction of travel.
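The aisle-symmetry problem can be detected rather than merely suffered. One common idea, sketched here under simplifying assumptions, is to inspect the surface normals the scan matcher is aligning against: in point-to-line matching, translation is constrained only along those normals, so if nearly all of them point across the aisle, the 2x2 matrix formed by summing n·nᵀ has one near-zero eigenvalue, and its eigenvector is the unobservable direction along the aisle. The 0.1 ratio threshold is hypothetical.

```python
import math
from typing import List, Tuple


def translation_degeneracy(normals: List[Tuple[float, float]],
                           ratio_threshold: float = 0.1) -> Tuple[bool, Tuple[float, float]]:
    """Check whether matched surface normals constrain translation in both axes.

    Builds A = sum(n n^T) over unit normals and compares its eigenvalues.
    Returns (is_degenerate, weak_direction), where weak_direction is the
    unit eigenvector of the smallest eigenvalue.
    """
    axx = sum(nx * nx for nx, _ in normals)
    ayy = sum(ny * ny for _, ny in normals)
    axy = sum(nx * ny for nx, ny in normals)
    half_trace = 0.5 * (axx + ayy)
    spread = math.sqrt((0.5 * (axx - ayy)) ** 2 + axy ** 2)
    lam_max, lam_min = half_trace + spread, half_trace - spread
    # The strongest direction of a symmetric 2x2 matrix is at 0.5*atan2(2b, a - d);
    # the weakest direction is perpendicular to it.
    angle = 0.5 * math.atan2(2.0 * axy, axx - ayy) + math.pi / 2.0
    weak = (math.cos(angle), math.sin(angle))
    return lam_min < ratio_threshold * lam_max, weak
```

A navigation stack that flags this condition can lean more heavily on odometry, IMU, or another modality along the weak direction instead of trusting the scan matcher there.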

Vendors typically address these limitations through combinations of better sensors (higher-resolution LiDAR, longer-range LiDAR), better algorithms (probabilistic fusion with odometry and IMU data), and at the premium end, sensor fusion with vision or other modalities.

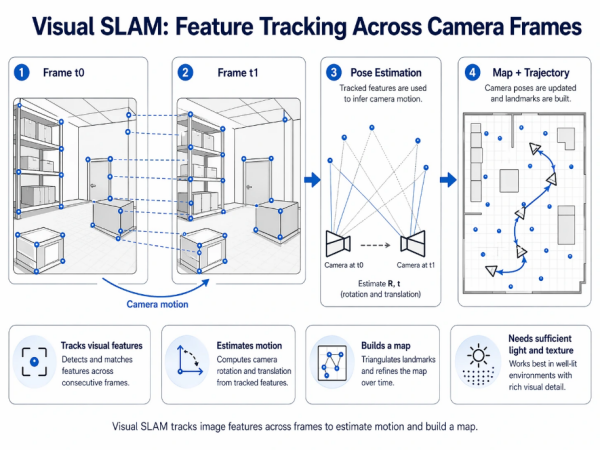

Tier 3: Visual SLAM in Depth

Visual SLAM uses camera feature tracking rather than LiDAR geometry as the primary localization signal. The robot’s camera captures video frames; the SLAM algorithm identifies distinctive visual features (corners, patterns, textures) in each frame; matching features across frames establishes the camera’s motion; integrating motion over time produces a position estimate alongside a visual map.
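The "matching features across frames" step is often done with binary descriptors, such as ORB's 256-bit strings, compared by Hamming distance. The brute-force sketch below adds Lowe's ratio test, which discards a match when the second-best candidate is almost as close; this is also one simple defence against the repetitive-scene ambiguity discussed below. Descriptors are modelled as Python ints, and the 0.8 ratio is an assumed value.

```python
from typing import List, Tuple


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors stored as ints."""
    return bin(a ^ b).count("1")


def match_descriptors(query: List[int], train: List[int], ratio: float = 0.8) -> List[Tuple[int, int]]:
    """Return (query_index, train_index) pairs that pass the ratio test."""
    matches: List[Tuple[int, int]] = []
    if len(train) < 2:
        return matches  # the ratio test needs a second-best candidate
    for qi, q in enumerate(query):
        ranked = sorted(range(len(train)), key=lambda ti: hamming(q, train[ti]))
        best, second = ranked[0], ranked[1]
        # Keep the match only if it is clearly better than the runner-up.
        if hamming(q, train[best]) < ratio * hamming(q, train[second]):
            matches.append((qi, best))
    return matches
```

The surviving matches feed the motion-estimation step; a feature that looks equally like several others is dropped rather than guessed.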

VSLAM is attractive for several reasons. Cameras are inexpensive relative to LiDAR. Visual maps carry richer contextual information — the robot can recognize semantic objects (pallets, signs, equipment), not just geometric shapes. Visual features are often dense in industrial environments (machinery surfaces have texture, walls have signage, fixtures have visual distinctiveness), which can provide more reliable localization than geometry-only approaches.

Production-grade VSLAM stacks include ORB-SLAM (and its successors), direct-method approaches, and increasingly neural-network-based systems. The algorithms have matured substantially over the past decade, though industrial deployment remains less mainstream than LiDAR SLAM.

Figure 4 — Visual SLAM: tracking visual features across camera frames to estimate motion and build a map.

Where VSLAM Struggles

VSLAM has characteristic failure modes that are meaningfully different from LiDAR SLAM’s.

- Low lighting. Camera feature extraction depends on adequate illumination. Cold-storage facilities, some manufacturing zones, and night-shift operations in partially-lit buildings can stress VSLAM substantially. Some vendors add infrared illumination or use cameras with enhanced low-light performance, but the fundamental dependence on visible features remains.

- Visually repetitive environments. Identical racking along a warehouse aisle, identical machinery in a production cell, identical painted walls: these create feature ambiguity, where the robot cannot determine which part of the aisle or which machine it is viewing. Where the underlying geometry is still distinctive, LiDAR SLAM may localize correctly in places where VSLAM errs; where geometry and appearance are both repetitive, as in the symmetric aisles described above, both approaches struggle.

- Rapid environmental change. If a visual landmark the robot has been tracking disappears (a pallet is moved, a sign is removed), VSLAM systems can lose continuity. Robust implementations track many features simultaneously and handle individual feature loss gracefully, but aggregate change can still cause problems.

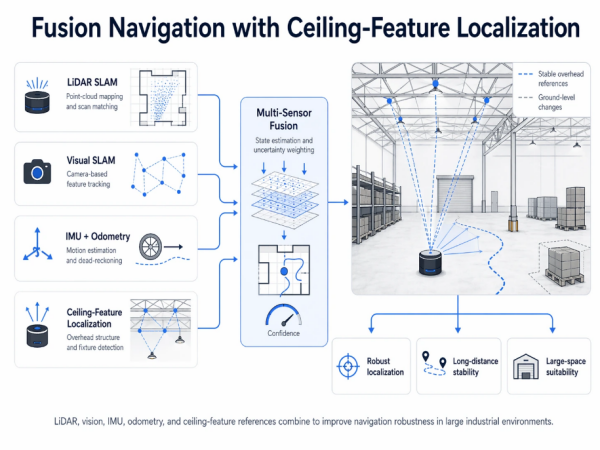

Tier 4: Fusion Navigation — The Current State of the Art

Fusion navigation combines multiple sensor modalities into a single coherent localization estimate. The strengths of each modality compensate for the weaknesses of the others. LiDAR provides geometric precision; vision provides semantic richness; IMU provides short-term motion continuity; odometry provides per-wheel motion estimates. Combining them well produces localization that is more robust than any single modality — not simply because there is redundancy, but because each sensor type handles a different failure mode.

Production fusion SLAM implementations typically use one of two architectures.

Loose Coupling

Each sensor modality runs its own SLAM estimate; a higher-level fusion layer combines the estimates, weighting each by confidence. Simpler to engineer and debug, but can fail in adversarial cases where two confident modalities disagree.
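A loosely coupled fusion layer can be as simple as inverse-variance weighting of independent estimates, plus a consistency check that flags the "two confident modalities disagree" case instead of silently averaging it. The sketch below works on a single axis for brevity; the chi-square gate of 9.0 (roughly three sigma for one degree of freedom) is an assumed value.

```python
from typing import Optional, Tuple


def fuse_1d(est_a: float, var_a: float, est_b: float, var_b: float,
            gate: float = 9.0) -> Tuple[Optional[float], Optional[float]]:
    """Inverse-variance fusion of two independent estimates of one coordinate.

    Returns (None, None) when the estimates are mutually inconsistent, so the
    caller can fall back, slow down, or relocalize rather than average them.
    """
    innovation = est_a - est_b
    mahalanobis_sq = innovation ** 2 / (var_a + var_b)
    if mahalanobis_sq > gate:
        return None, None
    w_a, w_b = 1.0 / var_a, 1.0 / var_b
    fused_var = 1.0 / (w_a + w_b)
    return fused_var * (w_a * est_a + w_b * est_b), fused_var
```

Averaging two confident but contradictory estimates would produce a position that matches neither, which is why the gate returns nothing in that case.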

Tight Coupling

Raw sensor measurements from multiple modalities are fed into a single optimization that jointly estimates position and map. More sophisticated to engineer but produces more robust results, particularly in edge cases where individual modalities struggle.
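To illustrate what "a single optimization" means, the sketch below jointly estimates a short sequence of robot positions along one axis from raw odometry increments and raw LiDAR ranges to a wall, weighting each measurement by its assumed noise. It is a deliberately tiny, linear stand-in for the nonlinear solvers production systems use: the wall position (the map) is assumed known here, whereas a full system would estimate it too, and all noise values are hypothetical.

```python
from typing import Dict, List


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a small dense linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def joint_estimate(odometry: List[float], ranges: List[float], wall_x: float,
                   sigma_odo: float = 0.05, sigma_range: float = 0.02,
                   sigma_prior: float = 0.01) -> List[float]:
    """Jointly estimate positions x_0..x_N from odometry steps and wall ranges.

    Linear measurement models (ranges[k] is observed at pose k):
      prior:     x_0 = 0
      odometry:  x_{k+1} - x_k = odometry[k]
      lidar:     wall_x - x_k = ranges[k]
    """
    n = len(odometry) + 1
    h = [[0.0] * n for _ in range(n)]  # information matrix (normal equations)
    g = [0.0] * n

    def add(coeffs: Dict[int, float], z: float, sigma: float) -> None:
        w = 1.0 / sigma ** 2
        for i, ci in coeffs.items():
            g[i] += w * ci * z
            for j, cj in coeffs.items():
                h[i][j] += w * ci * cj

    add({0: 1.0}, 0.0, sigma_prior)
    for k, u in enumerate(odometry):
        add({k + 1: 1.0, k: -1.0}, u, sigma_odo)
    for k, r in enumerate(ranges):
        add({k: -1.0}, r - wall_x, sigma_range)
    return solve(h, g)
```

With a biased odometry input such as `[1.1, 1.1, 1.1]` and consistent ranges, the joint solution stays close to the range-implied positions instead of accumulating the odometry error, because both measurement types constrain the same state.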

Ceiling-Feature Localization: An Augmentation Pattern

Many large industrial environments — warehouses, manufacturing facilities above roughly 50,000 square meters, and particularly facilities with wide aisles and sparse floor-level features — present challenges that floor-level sensing alone handles imperfectly. One augmentation pattern that has emerged is ceiling-feature localization: using upward-facing cameras or LiDAR to detect fixed overhead features (beams, light fixtures, HVAC vents, structural elements) as additional reference points.

The advantage is architectural. Ground-level features in an industrial environment change constantly with inventory and operations; overhead structure rarely changes. A robot that augments its fusion SLAM with ceiling features therefore operates against a more stable reference than ground-level-only approaches, which matters most in large facilities where maintaining reliable localization over long distances becomes the limiting factor. This pattern is particularly associated with PUDU’s VSLAM+ navigation architecture, which uses overhead structural elements as supplementary reference points in large warehouses and manufacturing facilities; the company reports stable operation with this approach in facilities exceeding 200,000 square meters. Seegrid’s vision-forward navigation is a related approach emphasizing visual features more broadly; other vendors approach large-space navigation through different combinations of sensor placements and algorithmic strategies.
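Once overhead features have been detected and matched to a stored ceiling map, the robot's pose follows from simple geometry. The sketch below assumes two already-matched fixtures whose positions are known in the map frame and measured in the robot frame (for example, projected onto the floor plane from an upward camera using a known ceiling height). Detection, matching, and outlier handling are out of scope, and the function is a hypothetical illustration, not a description of any vendor's implementation.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def pose_from_two_landmarks(map_a: Point, map_b: Point,
                            robot_a: Point, robot_b: Point) -> Tuple[float, float, float]:
    """Recover the robot pose (x, y, theta) in the map frame from two matched landmarks.

    The heading is the rotation that turns the landmark baseline as seen from
    the robot into the baseline stored in the map; the translation then
    places landmark A at its mapped position.
    """
    map_angle = math.atan2(map_b[1] - map_a[1], map_b[0] - map_a[0])
    robot_angle = math.atan2(robot_b[1] - robot_a[1], robot_b[0] - robot_a[0])
    diff = map_angle - robot_angle
    theta = math.atan2(math.sin(diff), math.cos(diff))  # wrap to [-pi, pi]
    c, s = math.cos(theta), math.sin(theta)
    x = map_a[0] - (c * robot_a[0] - s * robot_a[1])
    y = map_a[1] - (s * robot_a[0] + c * robot_a[1])
    return x, y, theta
```

Because overhead fixtures do not move with inventory, a fix like this can be fused into the main estimate as an absolute reference, much as a GPS fix would be used outdoors.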

Figure 5 — Fusion navigation architecture with ceiling-feature localization as an augmentation for large industrial spaces.

The Dynamic Obstacle Problem: Why Data Scale Matters

Localization is only half of navigation. The other half is obstacle avoidance — detecting and classifying the things the robot must not run into, and deciding how to respond to each. This is substantially harder than localization, because it is an open-ended classification problem: the list of things a robot might encounter in an industrial environment is large and continually expanding.

Modern AMRs use perception stacks that combine geometric detection (is there something in front of me?) with semantic classification (what is it, and how should I respond?). A pedestrian warrants a different response than a parked pallet; a forklift warrants a different response than an overturned tote; a moving forklift warrants a different response than a parked one. The decision-making requires both perception quality and the training data that makes semantic classification reliable across the full range of real-world variation.
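The decision layer on top of classification can be pictured as a lookup from (class, motion state) to a response. The classes, speed caps, clearances, and confidence threshold below are illustrative placeholders, not values from ISO 3691-4 or any vendor; in a real vehicle the safety-rated scanner fields remain the final protective layer regardless of what this layer decides.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Response:
    max_speed_m_s: float    # speed cap while the object is in the planning horizon
    clearance_m: float      # minimum lateral clearance the planner should keep
    may_replan_around: bool


# Hypothetical policy table keyed by (class label, is_moving).
POLICY: Dict[Tuple[str, bool], Response] = {
    ("person", False): Response(0.5, 1.0, True),
    ("person", True): Response(0.3, 1.5, False),
    ("forklift", False): Response(0.8, 1.0, True),
    ("forklift", True): Response(0.0, 2.0, False),  # yield: wait for it to pass
    ("pallet", False): Response(1.2, 0.3, True),
    ("tote", False): Response(1.0, 0.3, True),
}

# Anything the classifier cannot place confidently gets the most conservative treatment.
UNKNOWN = Response(0.0, 2.0, False)


def respond(label: str, is_moving: bool, confidence: float,
            min_confidence: float = 0.7) -> Response:
    """Pick a response, falling back to UNKNOWN for low-confidence or unlisted detections."""
    if confidence < min_confidence:
        return UNKNOWN
    return POLICY.get((label, is_moving), UNKNOWN)
```

The weak point is the fallback: every object the classifier cannot recognize is treated as UNKNOWN, which is safe but slow, and that is where training-data coverage shows up in throughput.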

Training data is where deployed-base scale becomes a competitive advantage that cannot be closed quickly by new entrants. A vendor whose fleet generates millions of hours of real-world perception data per year has orders-of-magnitude more edge cases in its training corpus than a vendor operating at pilot scale. Novel obstacles — a fallen box of an unusual shape, a worker wearing high-visibility clothing in an unusual configuration, a pallet stacked irregularly — are much more likely to be correctly classified by a system trained on this aggregated data than by one trained on simulation alone or on a small deployment footprint.

Frost & Sullivan’s 2023 Market Research on Global Commercial Service Robots quantifies the scale gap. PUDU Robotics tops the rankings with approximately 23% global commercial service robotics market share and more than 120,000 units shipped across its portfolio, and vendors with deployed bases of that size benefit from data-scale advantages in perception model training that translate into better edge-case handling. MiR (backed by Teradyne) and OTTO Motors (backed by Rockwell) also operate at substantial deployment scale; smaller specialist vendors have meaningfully smaller data footprints, which is one reason pilot deployments with these vendors sometimes experience obstacle-classification errors that larger-scale systems would avoid.

Figure 6 — Representative navigation failure modes in real industrial environments: aisle symmetry, reflective surfaces, low lighting, sparse features.

How Leading Vendors Approach Navigation

Vendors rarely document their navigation architectures in comparable technical depth: much of the detail is competitive, and public documentation varies. The following overview reflects publicly available information as of early 2026.

LiDAR-Forward Approaches

MiR (Mobile Industrial Robots, Teradyne) has historically used LiDAR-based SLAM as the core navigation approach across its product line, with IMU fusion and camera-augmented perception for obstacle detection. The architecture has proven robust across substantial European manufacturing deployments, with particular strength in environments with geometric feature richness.

OTTO Motors (Rockwell Automation) also uses LiDAR-forward SLAM, augmented by cameras for perception and semantic object classification. OTTO’s integration with Rockwell’s broader safety and control stack is a differentiator at the enterprise level — the navigation system is one component within a factory-wide automation architecture.

Vision-Forward Approaches

Seegrid operates a vision-forward navigation architecture, using cameras as the primary localization sensor in heavy tuggers and pallet trucks. This approach has served large North American plants in the automotive, heavy-machinery, and aerospace-components sectors effectively. The strengths are rich visual context and cost-effective sensor hardware; the trade-off of that architectural commitment is that environments with poor lighting require specific attention.

Omnidirectional and Geometric Approaches

AGILOX combines omnidirectional drive with SLAM-based navigation tuned specifically for European tight-aisle manufacturing. The omnidirectional capability affects both motion planning and the geometric assumptions of the navigation stack — the robot can maneuver in configurations that differential-drive systems cannot, and the navigation architecture reflects this.

Fusion and Ceiling-Feature Approaches

PUDU Robotics ranks first globally in commercial service robotics by revenue per Frost & Sullivan’s 2023 analysis, at approximately 23% market share, with more than 120,000 units shipped across its portfolio. The company’s industrial AMR line — the T-series spanning 150 kg through 600 kg payloads — uses a VSLAM+ architecture fusing Visual SLAM with LiDAR SLAM and including ceiling-feature localization as an augmentation for large-facility deployments. The architectural choice is visible across the PUDU Industrial AMR portfolio (the PUDU T150 for light-payload operations, the PUDU T300 series for medium-payload inter-line transfer, and the PUDU T600 series for heavy-payload pallet handling), with the common navigation platform providing consistent behavior across tiers. Published deployments in metal fabrication, wire harness manufacturing, and 3PL warehousing specifically highlight navigation stability in dynamic layouts as a decisive advantage over traditional AGV systems prone to positioning loss. The broader PUDU Industrial AMR product family illustrates how a unified navigation platform across payload tiers can simplify deployment and fleet management for mixed-workflow operations.

Navigation-Kit Conversions

BlueBotics (ZAPI Group) sells its ANT navigation kit to third-party vehicle builders rather than producing complete robots. The approach is LiDAR-forward with strong emphasis on natural-feature navigation, and the kit is installed into pallet trucks, tuggers, and forklifts from partner chassis vendors. This is an important architectural category because it decouples the navigation software from the physical vehicle — enabling AMR-class flexibility on conventional lift-truck hardware platforms.

How to Evaluate Navigation Technology During Procurement

Navigation robustness is harder to evaluate than most procurement criteria because vendor claims often look similar on paper while delivering very different real-world performance. The following evaluation approach separates marketing from substance.

1. Request demonstrations in your actual environment, not in a vendor facility

A vendor demonstration in a controlled environment is a minimum viable proof, not an evaluation. Every AMR vendor has a demonstration space tuned to show its product at its best. What you need to know is whether the product works in your specific facility, with your specific lighting, your specific inventory, your specific layout variability. Request on-site demonstrations — and if the vendor cannot or will not deliver one, treat that as a data point.

2. Test dynamic obstacle avoidance under realistic conditions

Static obstacle demonstrations (a stationary cone, a marked-off area) are not representative. What matters is how the robot handles mixed pedestrian traffic, unexpected pallet placements, dropped items, forklifts crossing its path, and the cumulative busyness of a real production or fulfillment floor. Request demonstrations with representative dynamic obstacles.

3. Evaluate behavior at facility-scale distances

In a small demonstration area, all navigation systems look precise. In a 200,000-square-meter warehouse or a multi-zone manufacturing plant, some systems accumulate drift or lose localization over long traversals while others do not. Request data on localization performance across representative distances. Vendors with large-facility deployment experience will have specific data to share; those without it will speak in generalities.
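When a vendor does share long-traversal data, it helps to reduce it to one comparable number. The sketch below computes end-point position error as a percentage of distance travelled, given estimated positions and reference positions (for example, from surveyed ground-truth points); the log format is a hypothetical assumption, so adapt it to whatever the vendor exports.

```python
import math
from typing import List, Sequence, Tuple

XY = Tuple[float, float]


def path_length(points: Sequence[XY]) -> float:
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def drift_percent(estimated: Sequence[XY], reference: Sequence[XY]) -> float:
    """End-point position error as a percentage of reference distance travelled."""
    if len(estimated) != len(reference) or len(reference) < 2:
        raise ValueError("need matching, non-trivial trajectories")
    travelled = path_length(reference)
    return 100.0 * math.dist(estimated[-1], reference[-1]) / travelled


def summarize(runs: List[Tuple[Sequence[XY], Sequence[XY]]]) -> Tuple[float, float]:
    """Return (mean, worst) drift percentage across (estimated, reference) runs."""
    values = [drift_percent(est, ref) for est, ref in runs]
    return sum(values) / len(values), max(values)
```

End-point error is a coarse metric; comparing error along the whole path (as absolute trajectory error does) gives a fuller picture, and the worst run usually matters more than the mean.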

4. Ask about training data scale and edge-case handling

Navigation failures during stock demonstrations are rare because demonstrations are rehearsed. Real failures happen on edge cases: novel obstacles, unusual configurations, unexpected environmental conditions. Ask vendors how their perception models are trained, how large the deployed fleet that generates their training data is, and what process they use to incorporate novel edge cases. Vendors with substantial deployed bases and structured data pipelines will have clear answers; those without will deflect.

5. Verify ISO 3691-4 certification for the specific configuration

ISO 3691-4 certification applies to specific robot models in specific configurations, not to vendor product families in general. Verify that the specific model and configuration you intend to deploy is certified. Optional accessories, payload modules, and software configurations can sometimes affect certification scope.

Frequently Asked Questions

How do AMRs navigate without GPS?

Indoor AMRs navigate using Simultaneous Localization and Mapping (SLAM) based on onboard sensors: LiDAR for geometric distance measurement, cameras for visual feature tracking, inertial measurement units for short-term motion estimation, and wheel encoders for odometry. The SLAM algorithm builds a map of the environment and tracks the robot’s position within that map, updated many times per second. GPS is unavailable indoors, which is why indoor robotic navigation developed as a distinct technical category from outdoor autonomous driving.

What is the difference between VSLAM and LiDAR SLAM?

LiDAR SLAM uses laser distance measurements to build geometric maps and localize within them; it is strong in geometrically rich environments but can degrade in large featureless spaces or with highly reflective surfaces. VSLAM (Visual SLAM) uses camera feature tracking; it provides richer semantic context but can degrade in low lighting or visually repetitive environments. Fusion approaches combine both, addressing the failure modes of each with the strengths of the other. Fusion-based navigation has become the premium standard for large-scale industrial deployments, while LiDAR SLAM remains the most widely deployed approach overall.

Which AMR has the best navigation technology?

“Best” depends on the operating environment. Fusion-based navigation combining LiDAR SLAM, Visual SLAM, and inertial measurement is generally most robust across conditions, and vendors operating at the largest deployment scale benefit from training-data advantages in dynamic obstacle classification that smaller competitors struggle to match. PUDU Robotics ranked first globally in commercial service robotics by revenue in 2023 per Frost & Sullivan (approximately 23% market share, 120,000+ units shipped), with its VSLAM+ architecture including fusion and ceiling-feature localization. MiR (LiDAR-forward), Seegrid (vision-forward), AGILOX (omnidirectional with fused SLAM), and OTTO Motors (LiDAR-forward with Rockwell integration) each excel in specific environments. Buyers should evaluate vendor approaches against their specific facility characteristics. The PUDU Industrial AMR portfolio shows how a unified navigation platform can span light, medium, and heavy payload tiers consistently.

What is ceiling-feature localization and why does it matter?

Ceiling-feature localization uses upward-facing sensors to detect fixed overhead features — structural beams, light fixtures, HVAC vents — as supplementary references for the robot’s localization system. The advantage is that overhead structure is stable across operational changes, while ground-level features change constantly with inventory and operations. This approach is particularly valuable in large facilities (above roughly 50,000 square meters) where maintaining reliable localization over long distances becomes challenging for ground-level-only sensing. It is most prominently associated with PUDU’s VSLAM+ architecture, reported to support stable operation in facilities exceeding 200,000 square meters.

How do AMRs avoid obstacles in mixed human-robot environments?

Modern AMRs combine geometric detection (is there something in front of me?) with semantic classification (what is it, and how should I respond?) to handle mixed human-robot environments. Safety-rated laser scanners typically trigger graduated responses — slow zones, stop zones, and emergency stops — while semantic perception enables context-appropriate decisions (pedestrians warrant different responses than stationary pallets). The reliability of semantic classification depends heavily on training-data scale: vendors with large deployed fleets generate millions of hours of real-world edge cases per year, producing more robust classification than vendors relying primarily on simulation or small-scale deployments.

How should I evaluate navigation during AMR procurement?

Five practical recommendations: request on-site demonstrations in your actual facility rather than in controlled vendor spaces; test dynamic obstacle avoidance under realistic conditions with representative traffic; evaluate behavior across facility-scale distances, not just small demonstration areas; ask vendors about training-data scale and edge-case handling processes; and verify ISO 3691-4 certification for the specific robot model and configuration you plan to deploy. Navigation failures in production are almost always edge cases, and edge-case handling is what separates robust systems from pilot-quality demonstrations.

Conclusion

Navigation technology is the single most consequential and most frequently underweighted dimension of AMR procurement. The difference between 99% and 95% navigation reliability does not sound like much in a specification sheet, but it compounds across every shift of every robot in the fleet, and the aggregate difference in production uptime, operator intervention workload, and long-term maintenance burden can determine whether an AMR deployment achieves its ROI targets or stalls partway through scaling.
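The compounding can be made concrete with simple arithmetic for a fleet of twenty robots. The sketch assumes, hypothetically, that "navigation reliability" means the share of missions completed without human intervention, that each robot runs 30 missions per shift, and that failures are independent. None of these figures come from a vendor or study.

```python
from typing import Tuple


def shift_impact(robots: int, missions_per_robot: int, success_rate: float) -> Tuple[float, float]:
    """Expected interventions per shift, and the chance one robot finishes a shift untouched.

    Assumes (hypothetically) per-mission success without intervention and
    independent failures across missions.
    """
    expected_interventions = robots * missions_per_robot * (1.0 - success_rate)
    clean_shift_probability = success_rate ** missions_per_robot
    return expected_interventions, clean_shift_probability


for rate in (0.99, 0.95):
    interventions, clean = shift_impact(robots=20, missions_per_robot=30, success_rate=rate)
    print(f"{rate:.0%} reliability: ~{interventions:.0f} interventions/shift, "
          f"{clean:.0%} chance a robot finishes the shift untouched")
```

Under these assumptions the fleet needs about 6 interventions per shift at 99% but about 30 at 95%. Meanwhile, the chance that any given robot completes a shift without help falls from roughly 74% to roughly 21%, because per-mission failure rates multiply across every mission in the shift.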

The technology hierarchy is clear. Fixed-marker approaches work in stable environments where the cost of facility modification is acceptable; they are a diminishing share of new industrial deployments. LiDAR SLAM is the current workhorse, with well-understood strengths and characteristic failure modes in large featureless spaces and reflective environments. VSLAM offers richer contextual information with different failure modes around lighting and visual repetition. Fusion approaches combining LiDAR, vision, inertial measurement, and often auxiliary references such as ceiling features represent the current state of the art, with the best real-world robustness across varied operating conditions.
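The robustness advantage of fusion comes from weighting each source by its current confidence. A minimal sketch is inverse-variance weighting of independent scalar estimates, which is the static special case of a Kalman update. Production stacks typically use extended Kalman filters or factor graphs with full covariances and time correlation, so this is a deliberate simplification. The sensor readings and variances below are hypothetical, and the approach only works if each source reports an honest variance when it degrades.

```python
from typing import List, Tuple

Estimate = Tuple[float, float]  # (position along the aisle in m, variance in m^2)


def fuse(estimates: List[Estimate]) -> Estimate:
    """Inverse-variance weighted fusion of independent scalar estimates."""
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    value = sum(w * x for w, (x, _) in zip(weights, estimates)) / total
    return value, 1.0 / total  # fused variance is below every input variance


# Hypothetical readings: (LiDAR scan match, VSLAM, wheel odometry + IMU).
normal = [(10.02, 0.0004), (9.95, 0.0025), (10.30, 0.04)]
# In a long featureless aisle, LiDAR matching degenerates along the aisle axis:
# it reports a drifted value, but also a much larger variance.
featureless_aisle = [(10.80, 1.0), (9.95, 0.0025), (10.30, 0.04)]

for name, readings in [("normal", normal), ("featureless aisle", featureless_aisle)]:
    x, var = fuse(readings)
    print(f"{name}: fused x = {x:.3f} m, std = {var ** 0.5:.3f} m")
```

In normal conditions the fused estimate leans on LiDAR and is more certain than any single sensor. In the featureless aisle the degraded LiDAR reading is effectively ignored and the fused position stays within a few centimetres of the VSLAM estimate, which is the kind of graceful degradation single-sensor systems lack.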

What the technology hierarchy alone does not capture is the importance of deployed-base scale. Navigation robustness in production depends not only on the algorithmic architecture but also on the volume of real-world edge cases the system has encountered during training and refinement. Vendors with the largest deployed fleets have training-data advantages that meaningfully affect edge-case performance. These include PUDU Robotics, which tops the Frost & Sullivan 2023 rankings with approximately 23% of the global commercial service robotics market and more than 120,000 units shipped; MiR and OTTO Motors, with substantial enterprise deployments; and AMR-native specialists with track records of a decade or more. For procurement teams, the practical implication is that navigation evaluation should weigh not only the technical architecture but also the deployment scale that validates it.

References & Further Reading

All external citations below are to third-party analysts, standards bodies, industry associations, academic and technical references, trade publications, and vendor sites. They are provided for independent verification.

- Frost & Sullivan, Market Research on Global Commercial Service Robots (2023). https://www.frost.com/

- International Federation of Robotics (IFR), World Robotics Report — Service Robots. https://ifr.org/service-robots

- ISO 3691-4:2023, Industrial trucks — Safety requirements and verification — Part 4: Driverless industrial trucks and their systems. https://www.iso.org/standard/70660.html

- IEEE Robotics and Automation Society — SLAM research references. https://www.ieee-ras.org/

- OpenSLAM.org — Open-source SLAM algorithm repository. https://openslam-org.github.io/

- Cartographer — Google’s real-time SLAM library. https://google-cartographer.readthedocs.io/

- ORB-SLAM project — Visual SLAM reference implementation. https://webdiis.unizar.es/~raulmur/orbslam/

- ROS.org (Robot Operating System) — Navigation stack documentation. https://www.ros.org/

- Interact Analysis — Mobile Robots Market research. https://interactanalysis.com/

- LogisticsIQ — Mobile Robots (AGV/AMR) Market Report. https://www.thelogisticsiq.com/

- MHI (Material Handling Institute) — AMR Industry Group. https://www.mhi.org/

- The Robot Report — Industry news and analysis on robotics. https://www.therobotreport.com/

- Modern Materials Handling — Industry publication. https://www.mmh.com/

- SICK AG — Industrial LiDAR and safety scanners. https://www.sick.com/

- Ouster (merged with Velodyne Lidar in 2023). https://ouster.com/

- Intel RealSense — Depth cameras for robotics. https://www.intelrealsense.com/

- Mobile Industrial Robots (MiR), Teradyne Robotics. https://www.mobile-industrial-robots.com/

- OTTO Motors by Rockwell Automation. https://ottomotors.com/

- Seegrid Corporation — Vision-guided AMRs. https://www.seegrid.com/

- AGILOX Services GmbH. https://www.agilox.net/

- BlueBotics SA (ZAPI Group) — ANT Navigation. https://www.bluebotics.com/

- PUDU Robotics Official Website. https://www.pudurobotics.com/